Building a master volumes UI with Impromptu

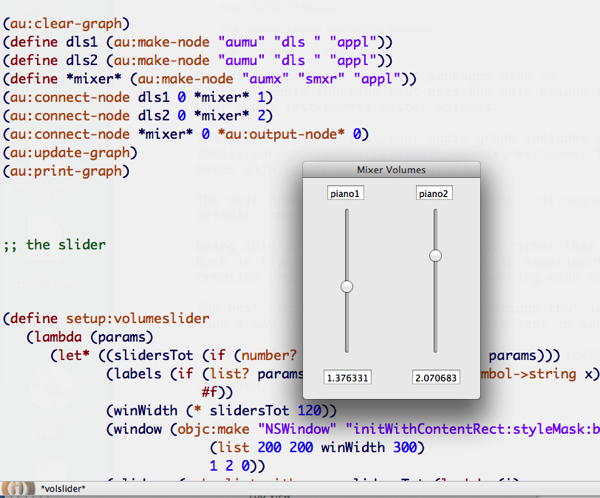

Based on one of the examples packaged with Impromptu, I wrote a simple function that uses the objc bridge to create a bare-bones user interface for adjusting the master volumes of your audio instruments.

Using the objc bridge

The script assumes that your audio graph includes a mixer object called *mixer*. Each UI control is tied to the gain value of one of that mixer's input buses.
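To illustrate the binding between UI controls and input-bus gains, here is a minimal sketch in Python rather than Impromptu's Scheme. It does not use the objc bridge or any Impromptu API: the `Mixer` class, `set_input_gain`, `make_slider_callback`, the four-bus default and the 0-100 slider range are all hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Mixer:
    """Hypothetical stand-in for the audio graph's mixer: one gain per input bus."""
    gains: list = field(default_factory=lambda: [1.0] * 4)

    def set_input_gain(self, bus: int, gain: float) -> None:
        # Clamp so a stray slider value cannot push the gain out of range.
        self.gains[bus] = max(0.0, min(1.0, gain))


def make_slider_callback(mixer: Mixer, bus: int, slider_max: float = 100.0):
    """Return the function a UI slider for `bus` would call on every move."""
    def on_slider_change(position: float) -> None:
        # Map the slider position (0..slider_max) to a gain (0.0..1.0).
        mixer.set_input_gain(bus, position / slider_max)
    return on_slider_change


mixer = Mixer()
# One callback per input bus, mirroring one slider per instrument.
callbacks = [make_slider_callback(mixer, bus) for bus in range(len(mixer.gains))]
callbacks[2](25)  # move the slider for bus 2 to a quarter of its range
```

Each slider only knows about its own bus, so adding an instrument to the mixer just means creating one more callback.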

The objc bridge commands are based on the silly-synth example that comes with the default Impromptu package.

Being able to control volumes manually rather than programmatically made a great difference for me. Both in live coding situations and while experimenting on my own, it speeds up the music creation process and makes it easier to work with multiple melodic lines.

The next step would be to add a MIDI bridge that lets you control the UI from an external device, while keeping the two controllers in sync. Enjoy!
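One possible way to keep an on-screen slider and an external device in sync is to route both through a shared value and notify every controller except the one that made the change. The sketch below is in Python and is only an assumption about how such a bridge could be structured. `VolumeModel`, `Controller`, and the 0-100 and 0-127 ranges are hypothetical names and values, and no real MIDI or Impromptu calls are involved.

```python
class VolumeModel:
    """Hypothetical shared state: the single source of truth for one gain."""

    def __init__(self):
        self.gain = 1.0
        self.listeners = []

    def subscribe(self, listener):
        self.listeners.append(listener)

    def set_gain(self, gain, source=None):
        gain = max(0.0, min(1.0, gain))
        if gain == self.gain:
            return  # nothing changed, so nobody needs an update
        self.gain = gain
        for listener in self.listeners:
            if listener is not source:  # do not echo back to the originator
                listener.show(gain)


class Controller:
    """A UI slider or MIDI fader, differing only in its numeric range."""

    def __init__(self, model, scale):
        self.model = model
        self.scale = scale
        self.displayed = round(model.gain * scale)
        model.subscribe(self)

    def show(self, gain):
        # Called when another controller changed the shared gain.
        self.displayed = round(gain * self.scale)

    def move(self, position):
        # Called when the user moves this controller.
        self.displayed = position
        self.model.set_gain(position / self.scale, source=self)


model = VolumeModel()
ui_slider = Controller(model, scale=100)   # assumed slider range 0..100
midi_fader = Controller(model, scale=127)  # MIDI controller values span 0..127
midi_fader.move(64)   # the UI slider follows the hardware fader
```

Skipping the originating controller is what prevents the two from triggering each other in a loop.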

P.S.: this is included in ImpromptuLibs: https://github.com/lambdamusic/ImpromptuLibs
